How I Build Presentations: Series Index

This is the index for the series of blog posts on how I build presentations:

Inline methods with ThreadPool and WaitCallback

Partly for my own memory (I will forget this in future, and at least it will be available here), and partly for an upcoming training session I am presenting: I wanted to inline a method when using the .NET ThreadPool’s QueueUserWorkItem method, which requires a WaitCallback pointing to a method. I did this using the lambda expression support in C# 3.0+, and it looks like this:

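    // Requires: using System; using System.Threading;
    static void Main(string[] args)
    {
        Console.WriteLine("Starting up");

        // Queue an inline lambda as the work item; f is the (unused) state argument.
        ThreadPool.QueueUserWorkItem(new WaitCallback(f =>
        {
            Console.WriteLine("Hello for thread");
            Thread.Sleep(500);
            Console.WriteLine("Bye from thread");
        }));

        // Keep the process alive until a key is pressed so the work item can finish.
        Console.ReadKey();
    }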

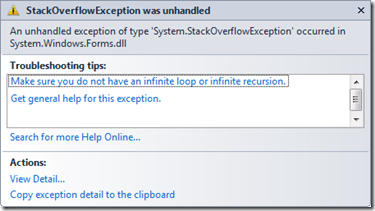
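As a variation on the snippet above, here is a minimal sketch of two related options: the explicit new WaitCallback(...) wrapper can be dropped, because the compiler converts the lambda to the delegate type, and the two-argument QueueUserWorkItem overload hands a state object to the callback. The names InlineWorkItemSketch and RunGreeting are hypothetical, and using a ManualResetEvent to wait for the work item (in place of Console.ReadKey) is an assumption made for the example, not something from the original post.

```csharp
using System;
using System.Threading;

static class InlineWorkItemSketch
{
    // Hypothetical helper: queues an inline work item and blocks until it has run.
    public static string RunGreeting(string name)
    {
        string result = null;
        using (var done = new ManualResetEvent(false))
        {
            // The lambda converts implicitly to WaitCallback, so no explicit
            // "new WaitCallback(...)" is needed. The second argument arrives
            // in the callback as the "state" parameter.
            ThreadPool.QueueUserWorkItem(state =>
            {
                result = "Hello from thread, " + (string)state;
                done.Set(); // signal the waiting caller
            }, name);

            // Wait for the signal before the event is disposed.
            done.WaitOne();
        }
        return result;
    }

    static void Main()
    {
        Console.WriteLine(RunGreeting("training session"));
    }
}
```

Blocking on the event is only sensible in a console demo like this one; in a UI application you would not want to block the main thread this way.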

StackOverflow with ListBox or ListView

I have been writing some multi-threaded code recently, where I was initially adding items to the .NET ListBox control and later changed to the ListView control. In both cases I would get a StackOverflow exception fairly consistently when I added the 224th item (I added my first two items manually, so it was the 222nd item added via a separate thread). The first troubleshooting step is to check that you do not have an infinite loop, and I could confirm that my code did not.

So the first thing I tried was to limit the number of items added with each button click. Doing this let me go well over the 224/222 limit from before, which eliminated any thought of a limit on the number of items the controls could handle.

After some other failed tests I found that the problem was how I was handling the cross-thread communication: a separate thread was adding the items to a control that had been created on the application's main thread. To handle the cross-thread communication I kept calling this.BeginInvoke but never called this.EndInvoke, which a lot of places seem to say is fine. However, at some point it will fail with the StackOverflow exception. That point depends on a number of factors (including the worst factor of all, timing, which makes this one of those issues that may only appear in the field).

My solution was simple: change to the standard this.Invoke method, and the issue went away.
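To make the fix concrete, here is a minimal sketch of a worker thread adding items to a ListBox through the blocking this.Invoke call. It is not the original application: the form name ListFillForm, the AddItems helper and the item count of 500 are all hypothetical, chosen only to go past the 224 items mentioned above.

```csharp
using System;
using System.Threading;
using System.Windows.Forms;

// Hypothetical form used only to illustrate the Invoke pattern.
class ListFillForm : Form
{
    private readonly ListBox items = new ListBox { Dock = DockStyle.Fill };

    public ListFillForm()
    {
        Controls.Add(items);
        // Start the worker once the form is shown, so the window handle
        // that Invoke relies on already exists.
        Shown += (sender, e) => ThreadPool.QueueUserWorkItem(state => AddItems(500));
    }

    // Runs on a thread-pool thread, not on the UI thread.
    private void AddItems(int count)
    {
        try
        {
            for (int i = 0; i < count; i++)
            {
                string text = "Item " + i;
                // Invoke blocks the worker until the UI thread has added the
                // item, so at most one call is pending at any time.
                this.Invoke(new MethodInvoker(() => items.Items.Add(text)));
            }
        }
        catch (InvalidOperationException)
        {
            // The form was closed or disposed while the worker was running
            // (ObjectDisposedException derives from this type); stop quietly.
        }
    }

    [STAThread]
    static void Main()
    {
        Application.Run(new ListFillForm());
    }
}
```

The trade-off is that the worker now waits for the UI thread on every item, which is slower than firing off BeginInvoke calls but keeps the two threads in step.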

For the search engines the full exception is “An unhandled exception of type 'System.StackOverflowException' occurred in System.Windows.Forms.dll”.

Most Valuable Indian

So yesterday I posted about receiving the MVP award. Well, today it got better: my friend, co-worker, fellow VSTS Ranger and S.A. Architect lead, Zayd Kara, has also been awarded an MVP for his work with Team System! Congratulations Zayd!

And the award goes to...

With the countdown clock at T-10 days to my sabbatical trip, an email popped into my mailbox… it was an email from Microsoft congratulating me on getting the MVP (Most Valuable Professional) award for my work with Team System!

What is this MVP Award?

The Microsoft MVP Award is an annual award that recognizes exceptional technology community leaders worldwide who actively share their high quality, real world expertise with users and Microsoft… With fewer than 5,000 awardees worldwide, Microsoft MVPs represent a highly select group of experts. MVPs share a deep commitment to community and a willingness to help others. They represent the diversity of today’s technical communities. MVPs are present in over 90 countries, spanning more than 30 languages, and over 90 Microsoft technologies. MVPs share a passion for technology, a willingness to help others, and a commitment to community. These are the qualities that make MVPs exceptional community leaders. MVPs’ efforts enhance people’s lives and contribute to our industry’s success in many ways. By sharing their knowledge and experiences, and providing objective feedback, they help people solve problems and discover new capabilities every day. MVPs are technology’s best and brightest…

Richard Kaplan, Microsoft Corporate Vice President

So this is a great honour for me to be welcomed into a group of people who I look up to and respect :) You can see my new MVP profile up at https://mvp.support.microsoft.com/profile/Robert.MacLean

Presentation Data Dump

Over the last year I have done a number of presentations and recently uploaded some of them to SlideShare (unfortunately I cannot upload all of them, as some contain NDA information). So here is the collection of presentations from the last 15 months or so, in no particular order:

- ASP.NET Dynamic Data

- JSON and REST

- What’s Microsoft CRM all about?

- Source Control 101

- SQL Server Integration Services

- ASP.NET MVC

- What’s new in the .NET Framework 3.5 SP 1

T-34 days and counting...

This morning I got up for a quick cycle, and as I rode up the last big hill before home the sun really started to beat down on me and the sweat changed from cooling moisture to dripping. This was all at 6am, which is normal for a South African summer day; in fact our winters in Johannesburg aren’t too bad either. It is normally around single digits at night in winter, and the days go up to 14 or so degrees. I guess that is why Willy-Peter decided to send me this picture: it’s a warning that I had better go shopping for a jacket or nine.

T-40 days and counting...

In 40 days I will be starting a very exciting adventure: flying to Canada and America for 3 weeks of what is being referred to as the Rangers Sabbatical. The what, you may be asking yourself? I have posted previously on my involvement in the Microsoft VSTS Rangers project. Well, in January I will be heading off to hang out with Willy-Peter Schaub at the MCDC (Microsoft Canada Development Centre) in Vancouver, Canada, and then Chuck Sterling at Microsoft Corp HQ in Redmond, USA.

I am hoping to be able to blog a lot about this trip, although I doubt that I will be allowed to share photos of that central server Microsoft runs, which all traffic on the internet runs through and which is built on Linux (that is a joke, for those with humour issues). This will be my first time in North America, so it really is going to be an exciting adventure.

Google Maps City More Info

I was answering a question on World Cup 2010 Dizcus and found an amazing feature on Google Maps. I was looking for maps of cities in SA, and I stumbled across this cool more info link.

More info takes you to a portal for the city with information on the time and time zone, a high-level map of the area, photos and videos of the town, popular places and related maps. This is a great resource when you are looking for information on a city that you have never been to! Below is a screenshot from my home town of Johannesburg.

Dev4Devs - 28 November 2009

Well, today is the day! Dev4Devs is happening at Microsoft this morning and I will be speaking on 12 new features in the Visual Studio 2010 IDE. For anyone wanting the slide deck and demo application I used, you can grab them below.

The slide deck is more than the 6 visible slides: there are in fact 19 slides, which cover the various demos and have more information on them, so you too can present this to family and friends :)